Yigit Bezek

I build things. Mostly AI systems and full stack apps that actually ship.

BSc Creative Technology from UT. Applying to MSc Data Science and AI now. I work across the stack from Arduino firmware to Cloudflare Workers and I like projects that have to survive contact with real users.

LeadOrch

Live B2B lead gen SaaS that takes a company name and a use case and gives you 4 verified, scored, personalised outreach drafts. A 15 stage AI pipeline runs on Cloudflare Workflows and talks to 11 external APIs per run. Live at leadorch.io.

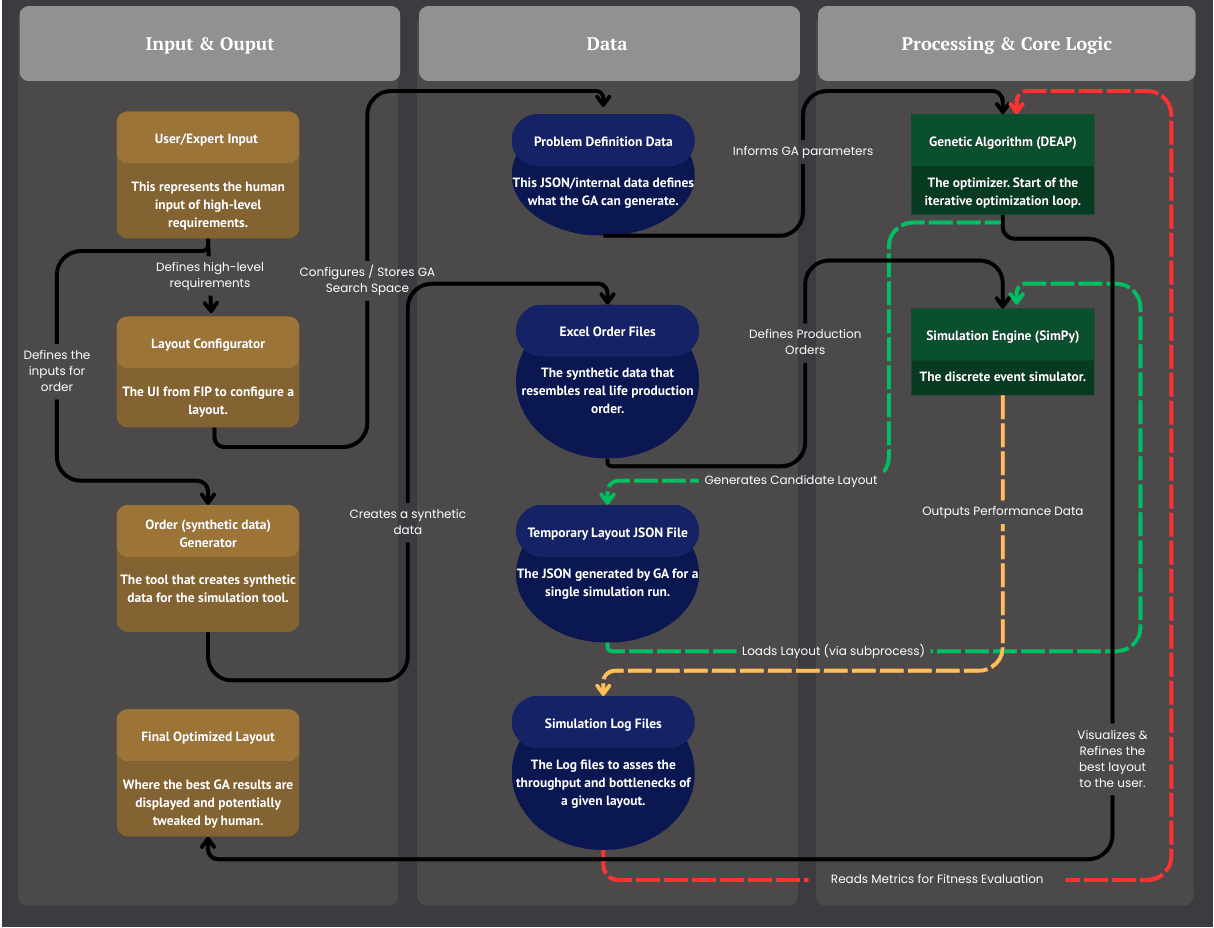

UT Creative Technology BSc graduation project for Van den Bos CM, a Dutch corrugated cardboard transport system manufacturer. A hybrid NSGA-II genetic algorithm with a two tier evaluator (millisecond surrogate plus SimPy DES) optimises factory layouts on conveyor length and routing efficiency, automating a workflow that currently takes weeks of manual back and forth.

- Process level gene encoding collapses the search space from about 10⁹⁰ down to about 5¹² by encoding high level design decisions (machine_order, wip_type, corridor_slice, turntable_policy, speed_tier) in a 12 integer chromosome

- Two tier evaluation pipeline. The surrogate evaluator runs all 40 candidates per generation in milliseconds and only the top 20 percent (8 individuals) go into a full SimPy DES run. Total budget 160 SimPy runs over 20 generations instead of 800
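A rough sketch of how the two tier budget works out. The population size, generation count and 20 percent cut are the numbers from the bullet above. The two scoring functions and the five options per gene are hypothetical stand-ins, not the thesis code.

```python
import random

POP_SIZE = 40      # candidates per generation (from the text)
GENERATIONS = 20   # generations (from the text)
TOP_PERCENT = 20   # share promoted to the full simulation (from the text)
GENES = 12         # chromosome length (from the text)
GENE_LEVELS = 5    # assumed number of options per gene, for illustration only


def surrogate_score(chromosome):
    """Hypothetical cheap proxy, lower is better. Stand-in for the real surrogate."""
    return sum(chromosome)


def full_simulation(chromosome):
    """Hypothetical placeholder for one expensive SimPy DES run."""
    return sum(g * g for g in chromosome)


def evaluate_generation(population):
    """Rank everyone with the cheap score, then simulate only the top slice."""
    ranked = sorted(population, key=surrogate_score)
    n_promoted = len(ranked) * TOP_PERCENT // 100
    return [(c, full_simulation(c)) for c in ranked[:n_promoted]]


rng = random.Random(0)
population = [
    [rng.randrange(GENE_LEVELS) for _ in range(GENES)] for _ in range(POP_SIZE)
]
promoted = evaluate_generation(population)

simulation_budget = len(promoted) * GENERATIONS  # expensive runs actually spent
naive_budget = POP_SIZE * GENERATIONS            # if every candidate were simulated
print(len(promoted), simulation_budget, naive_budget)  # 8 160 800
```

The point of the split is that the expensive evaluator only ever sees candidates the cheap one already likes, which is where the 160 versus 800 comes from.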

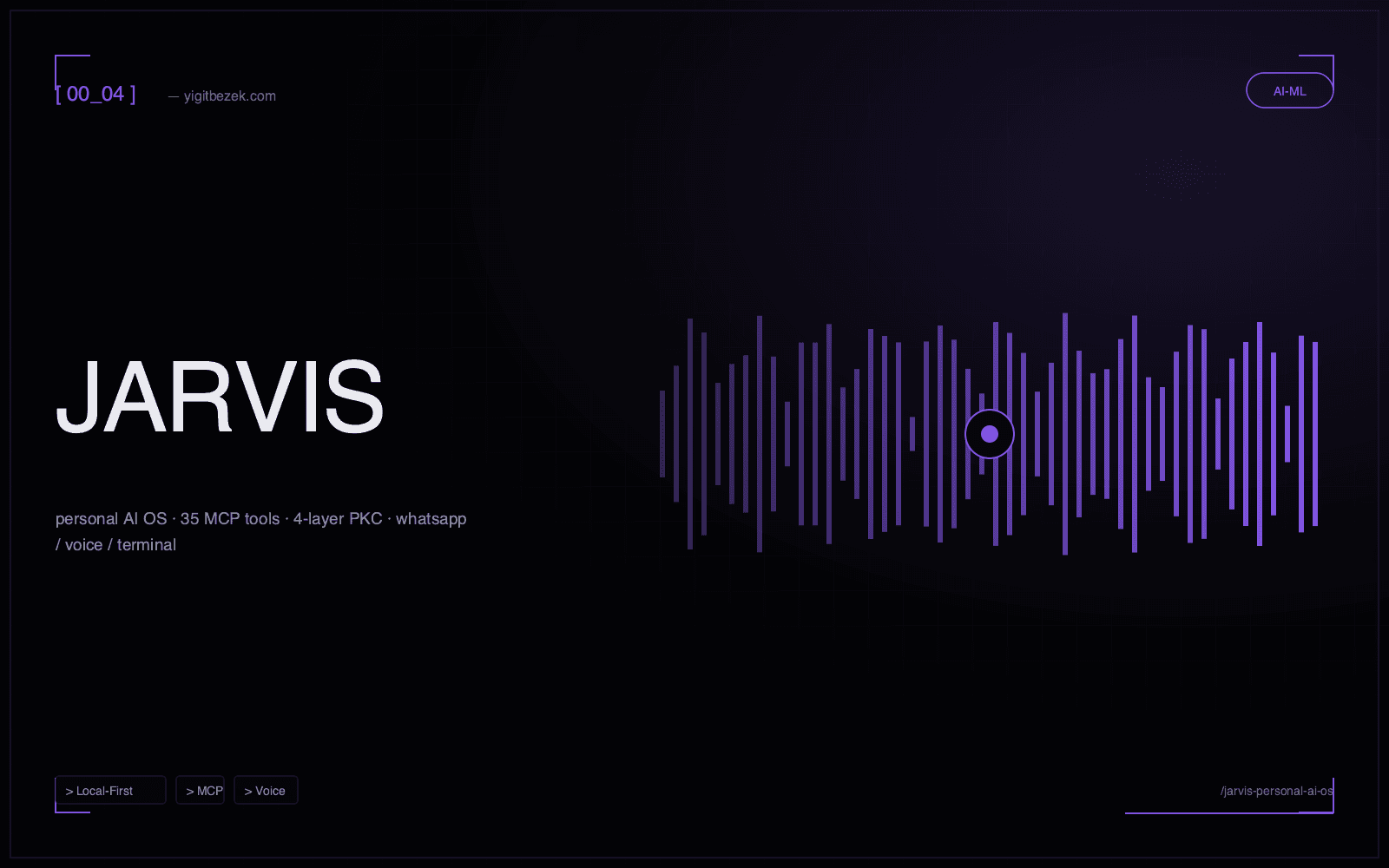

Local first personal AI operating system. 11 Python packages, 35 MCP tools, a daemon with 12 scheduled jobs, a 4 layer Personal Knowledge Core, policy based LLM routing across 6 task classes, and cross channel reach over WhatsApp, Telegram, email, calendar and voice. All of it runs on Ollama on an Apple M3.

- 35 MCP tools registered with Claude Code via 'claude mcp add jarvis'. IDE sessions get jarvis_search, jarvis_context, jarvis_memory_query, jarvis_mail, jarvis_calendar, jarvis_say, jarvis_bugpatrol, jarvis_news, jarvis_jobs, jarvis_build and more

- 4 layer Personal Knowledge Core (Identity, Domain, Operational, Episodic) on SQLite plus FTS5 with confidence gated writes. Identity needs 0.7, domain entries 0.6, episodes are append only from any source
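A minimal sketch of what a confidence gated write could look like. The 0.7 and 0.6 thresholds are the ones listed above. The table layout and the `gated_write` name are hypothetical, the operational layer is treated as ungated because no threshold is stated for it, and a plain table is used instead of FTS5 so the snippet runs on any SQLite build.

```python
import sqlite3

# Thresholds from the text. Layers without an entry are not gated in this sketch.
MIN_CONFIDENCE = {"identity": 0.7, "domain": 0.6}


def gated_write(conn, layer, content, confidence):
    """Insert a row only if it clears the layer's confidence threshold.

    Episodic rows have no threshold, so they are always appended.
    Returns True when the row was written.
    """
    if confidence < MIN_CONFIDENCE.get(layer, 0.0):
        return False
    conn.execute(
        "INSERT INTO pkc (layer, content, confidence) VALUES (?, ?, ?)",
        (layer, content, confidence),
    )
    return True


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE pkc (layer TEXT, content TEXT, confidence REAL)")

accepted = [
    gated_write(conn, "identity", "prefers local models", 0.65),  # below 0.7
    gated_write(conn, "domain", "SimPy is a DES library", 0.65),  # clears 0.6
    gated_write(conn, "episodic", "daemon job finished", 0.10),   # append only
]
row_count = conn.execute("SELECT COUNT(*) FROM pkc").fetchone()[0]
print(accepted, row_count)  # [False, True, True] 2
```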

A Tauri 2 desktop app for writing dense LLM agent prompts, annotating them with comments and reference images, and shipping the result straight into a Claude Code terminal session. Pairs with a stdio MCP server so a different Claude Code session can read every plan and annotation on demand.

- Tauri 2 app with a Rust command surface that enumerates live tabs across four terminal apps (iTerm2, Terminal, Ghostty, VS Code) using per app AppleScript dialects

- send_to_terminal handles text and images. Text via pbcopy plus AppleScript paste, images via clipboard «class PNGf» plus Cmd+V, non images via typed @filepath references

End to end LinkedIn job pipeline that scrapes postings, enriches them through headless Chrome, scores them with Claude, writes a per application PDF cover letter, then auto fills external ATS forms with a 3 tier hybrid system. DOM parsing first, API interception for React SPAs, Claude Vision as a last resort.

- 3 tier hybrid auto apply. DOM parsing, API interception (for React SPAs), Claude Vision fallback. Tiers 1 and 2 cost $0 per application, tier 3 about $0.50

- 82% success rate (9 of 11) on a March 2026 batch test across 11 ATS platforms (including Greenhouse, Lever, Ashby, Breezy, Teamtailor, Personio, SmartRecruiters)
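The tier order above can be sketched as a simple fallback chain. The per tier costs are the ones quoted in the bullets. The three handler functions and the shape of the `form` dict are hypothetical stand-ins, not the real form fillers.

```python
from typing import Callable, Optional


def fill_via_dom(form: dict) -> Optional[dict]:
    """Hypothetical tier 1: succeeds only if plain DOM fields were found."""
    return form.get("dom_fields")


def fill_via_api(form: dict) -> Optional[dict]:
    """Hypothetical tier 2: succeeds only if an API schema was intercepted."""
    return form.get("api_schema")


def fill_via_vision(form: dict) -> Optional[dict]:
    """Hypothetical tier 3: stand-in for a vision model call, always answers."""
    return {"source": "vision"}


# (name, handler, cost in USD per attempt); costs taken from the text.
TIERS: list[tuple[str, Callable[[dict], Optional[dict]], float]] = [
    ("dom", fill_via_dom, 0.0),
    ("api", fill_via_api, 0.0),
    ("vision", fill_via_vision, 0.50),
]


def auto_fill(form: dict):
    """Try each tier in order and stop at the first one that returns a result."""
    spent = 0.0
    for name, handler, cost in TIERS:
        spent += cost  # a tier costs money as soon as it is attempted
        result = handler(form)
        if result is not None:
            return name, result, spent
    return None, None, spent


print(auto_fill({"dom_fields": {"email": "input#email"}}))
print(auto_fill({}))
```

Ordering the tiers by cost means the paid fallback only runs when both free strategies have already failed.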

AI powered auto approval guardian for Claude Code that evaluates tool use requests in real time. Sentinel intercepts the JSON-RPC permission prompts, applies configurable risk heuristics and auto approves the low risk stuff, so I stay in flow without losing control of anything dangerous.

- Intercepts every tool-use permission Claude Code emits over JSON-RPC and decides in milliseconds

- Layered policy engine: file-path allowlists, command-pattern matching, token-budget guards
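A small sketch of the layered idea, assuming each layer can only escalate to a manual prompt and never override an earlier layer. The `Request` shape, the allowlist, the regex patterns and the budget figure are all hypothetical examples, not Sentinel's actual rules.

```python
import fnmatch
import posixpath
import re
from dataclasses import dataclass


@dataclass
class Request:
    tool: str
    path: str = ""
    command: str = ""
    tokens: int = 0


ALLOWED_PATHS = ["/workspace/*"]  # hypothetical allowlist
RISKY_COMMANDS = [re.compile(r"\brm\s+-rf\b"), re.compile(r"\bsudo\b")]  # hypothetical
TOKEN_BUDGET = 10_000  # hypothetical per session budget


def decide(req: Request, tokens_used: int) -> str:
    """Return "approve" only if every layer passes, otherwise "ask"."""
    if req.path:
        # Normalise first so "/workspace/../etc" cannot sneak past the glob.
        path = posixpath.normpath(req.path)
        if not any(fnmatch.fnmatchcase(path, p) for p in ALLOWED_PATHS):
            return "ask"
    if any(p.search(req.command) for p in RISKY_COMMANDS):
        return "ask"
    if tokens_used + req.tokens > TOKEN_BUDGET:
        return "ask"
    return "approve"


print(decide(Request("Read", path="/workspace/src/app.py"), 0))    # approve
print(decide(Request("Bash", command="sudo rm -rf /"), 0))         # ask
print(decide(Request("Read", path="/workspace/../etc/passwd"), 0)) # ask
```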

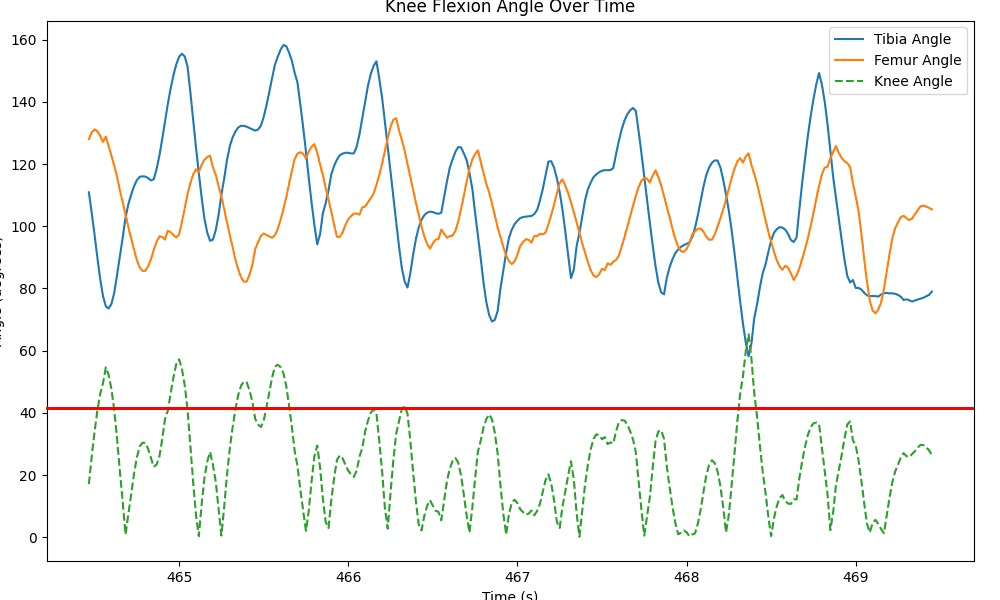

A treadmill facing ambient feedback system that watches a runner's knee flexion and gait rhythm in real time through two body worn IMUs (one on the tibia, one on the femur) and fades a big screen from green to yellow to red as form degrades. Good form keeps it green. A collapsing knee angle or rhythm drift turns it red with a gentle game over.

- Live knee flexion signal from two body worn IMUs at 100 Hz, fused through a complementary filter and streamed into a matplotlib live plot

- Concept sketched May 2024, active prototype January 2026. End to end data pipeline verified with sit to stand and running gait captures at the UT DesignLab

Multi game social platform for Texas Hold'em, Omaha and Short Deck with real time multiplayer over websockets. ELO based matchmaking, animated chip physics, in game chat and a full tournament bracket system, all in a responsive React UI.

- Sub-50ms event broadcast via Socket.IO across every seat at the table

- Hand evaluation via lookup-table algorithm — O(1) at showdown, no runtime iteration
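For the ELO side of matchmaking, the standard two player rating update looks like this. It is the textbook formula, not necessarily how the platform scores a multi seat table, and the K factor of 32 is an assumed value.

```python
K_FACTOR = 32  # assumed; a common default, not taken from the project


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update_rating(rating_a: float, rating_b: float, score_a: float) -> float:
    """New rating for A. score_a is 1 for a win, 0.5 for a draw, 0 for a loss."""
    return rating_a + K_FACTOR * (score_a - expected_score(rating_a, rating_b))


print(update_rating(1500, 1500, 1))  # 1516.0
```

A matchmaker can then pair players whose expected score is close to 0.5.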

Early stage B2B sustainability concept I scoped and submitted to the ELIAS European AI funding programme. EcoSynergy was framed as an AI driven ESG analytics product around satellite imagery, IoT energy data and climate datasets. This is a business development and funding pitch artefact, not a shipped platform, kept on the site as honest evidence of entrepreneurial work.

- Submitted as a formal funding pitch to the ELIAS European AI programme

- Scoped a satellite-NDVI + smart-meter + weather-forecast pipeline for SME ESG reporting

Git repository scanner and analytics dashboard that visualises commit frequency, contributor velocity, code churn hotspots and language breakdowns across all your repos. Talks to the GitHub API and renders rich filterable charts in a responsive React interface.

- Ingests repo metadata from GitHub REST + GraphQL into a local SQLite snapshot

- Commit heat calendars, per-author line-impact graphs and automated code-health scores

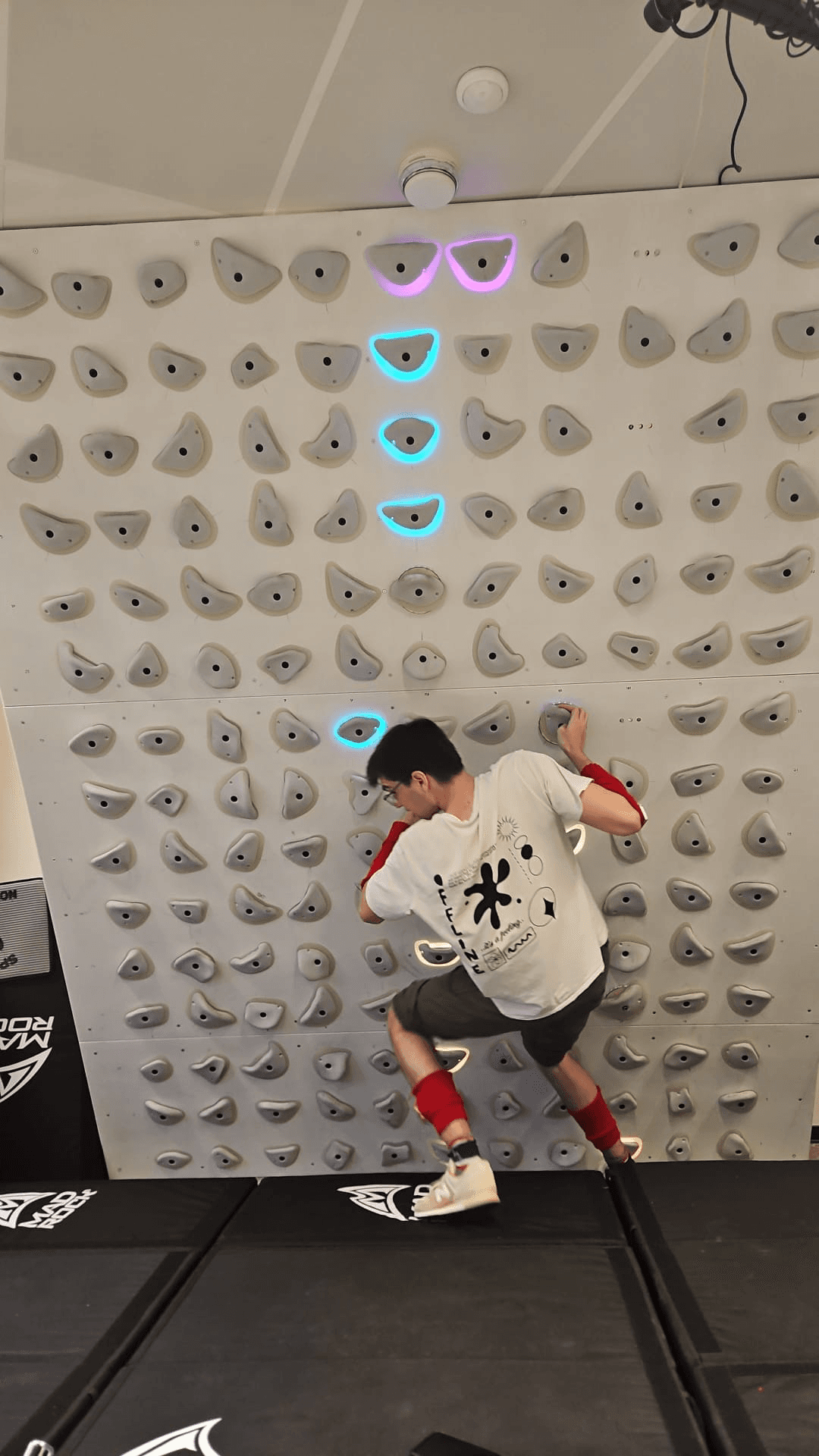

A 6 person HiFi HCI build. A wearable haptic coach for boulderers. A laptop watches the wall and the climber over a webcam, infers body pose and weight distribution, and fires a vibration on the exact limb that should move next across four ESP8266 armbands and legbands. It deliberately hints instead of solving.

- Demoed live on a lit hold bouldering wall at a Mad Rock climbing gym with a four wearable haptic coach

- Distributed six device network. Laptop plus ESP32 master plus four Wemos D1 mini (ESP8266) ESP-NOW slaves on arms and legs

Real time game state visualisation and overlay rendering on a transparent always on top window. Combines public API ingestion with memory mapped game state reads, computer vision on the rendered minimap and low latency UI sync. 60 fps with zero input lag impact and zero modification of the host client.

- Transparent click-through Electron overlay pinned at 60 fps with zero input-lag impact

- C# companion does memory-mapped game-state reads for sub-frame event timing the public API can't expose

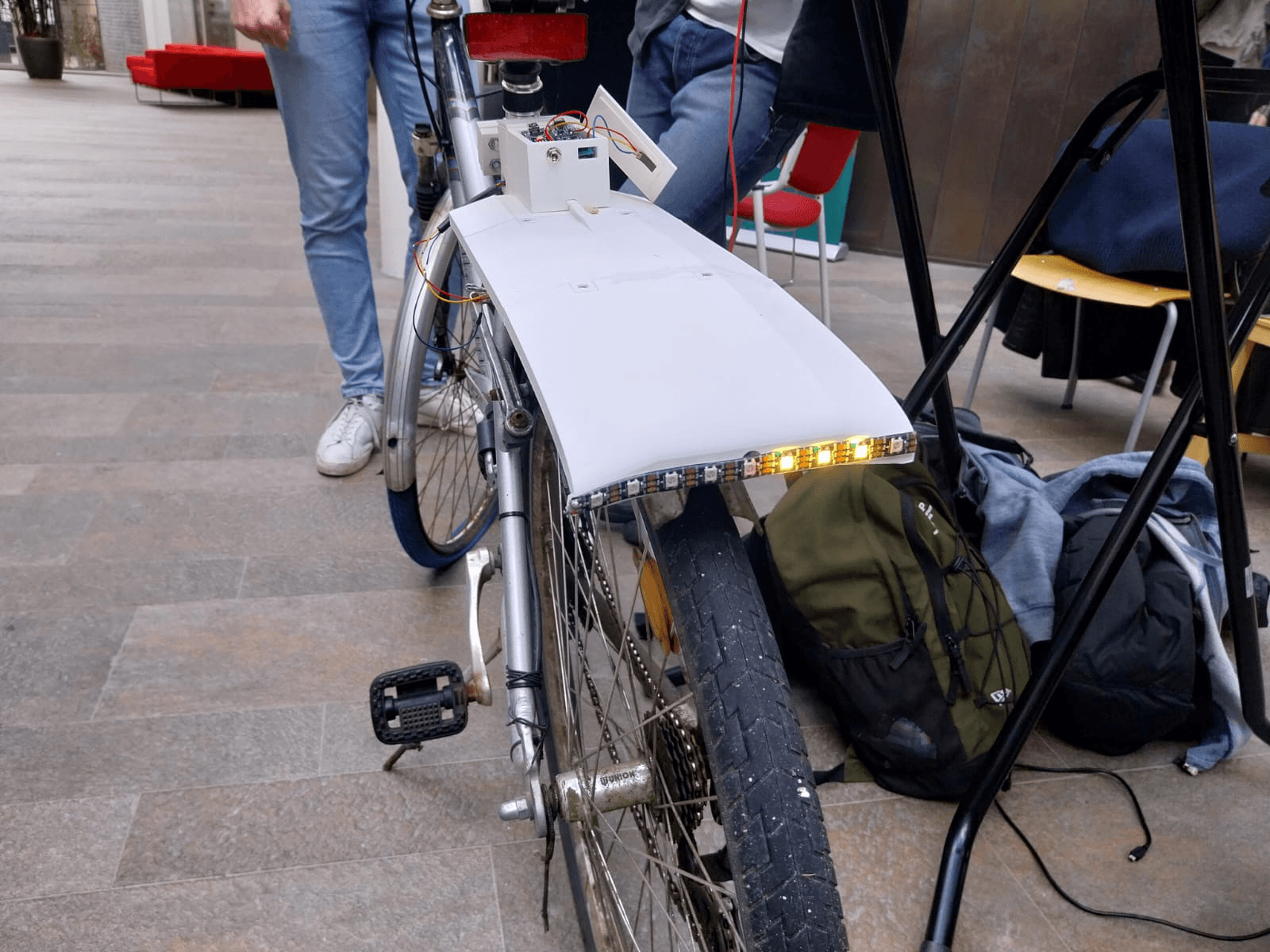

A portable anti theft smart bike lamp built by a 7 person team. The expensive brain (MCU, Li-Po, addressable LED strip, IMU) lives in a detachable module that slides onto a magnet plus pogo pin rail on a 3D printed fender, so a passing thief can only walk off with the cheap passive mount. It has car like turn indicators and a brake light that intensifies on deceleration, all driven from three handlebar switches.

- Portable anti theft design. MCU, Li-Po, LED strip and IMU all live in a detachable brain that slides onto a magnet plus pogo pin rail. Only the cheap passive mount stays on the bike

- Car like signaling. Left and right NeoPixel blinkers, full strip red brake flash held 3 s, dim red idle pattern. All driven from three handlebar switches (LEFT=9, RIGHT=3, BRAKE=5)

Two IMU wearable that streams accelerometer and gyroscope data from sensors strapped to the tibia and femur, fuses them through a complementary filter and plots the live knee flexion angle plus sit and stand transitions in Python. Built as the Module 8 Biomedical Signals and Systems project.

- Live knee flexion angle from two body mounted IMUs at 100 Hz with a 5 second rolling display

- Three fusion strategies kept side by side (acc only, gyro only, complementary) for direct comparison

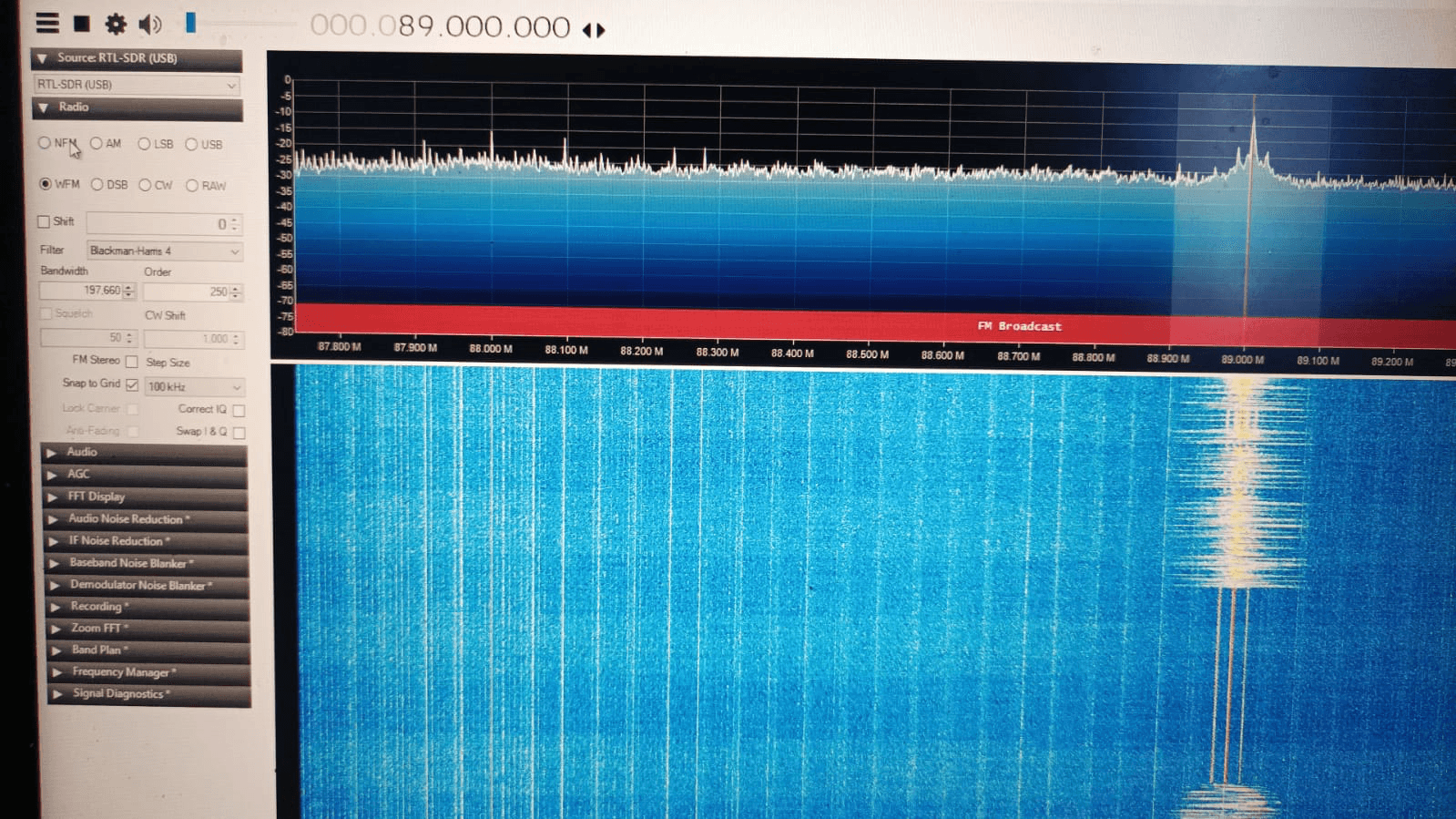

A personal software defined radio bench built on an RTL-SDR dongle (later HackRF One plus dual way transceivers) and a Kali / Pentoo Linux toolchain. Tunes FM broadcast, decodes amateur radio SSTV in Robot, Scottie, Martin and SC2 modes, tracks aircraft overhead via ADS-B, and followed open band HF and VHF during active Russia Ukraine coverage in 2023.

- Multi platform SDR bench. RTL-SDR plus HackRF One plus dual way transceivers on Kali and Pentoo Linux

- Documented FM broadcast capture in SDR# (89.0 MHz, Blackman-Harris-4 filter, clean carrier in the waterfall)

Contract project for a Turkish music producer. The team stood up its own GSM base station using full duplex SDRs and programmable SIM cards, spun up effectively unlimited phone numbers on demand, and drove a human looking Android automation farm through Android Studio plus Appium to seed engagement across Spotify, YouTube and Instagram. Framed honestly. What the tech actually does, not the marketing wrapper.

- Built a private GSM operator from full duplex SDRs plus programmable SIM cards. An effectively unlimited pool of real phone numbers for account creation and SMS verification

- Android Studio plus Appium farm driving dozens of phones through deliberately human paced interaction loops (scroll, watch, like, comment, sleep) instead of brute force actions

A 3D first person zombie shooter built in Ursina where you aim with your hand through webcam instead of a mouse. A cvzone hand detector tracks the index fingertip, raising it reloads and lowering it fires, and a Panda3D collision ray turns the screen space cursor into a 3D world hit. Year 2 Module 6 AI and Programming final.

- Aim with your hand. cvzone HandDetector tracks landmark 5, mapped from a hand calibrated camera pixel window into Ursina UI coordinates

- Shoot and reload by raising and lowering the index finger. The gesture is the trigger, no mouse or keyboard
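The pixel window to UI mapping can be sketched as a clamped linear rescale. This assumes a UI space where y runs from -0.5 to 0.5 and x is scaled by the window aspect ratio, with y pointing up. The window bounds and the function name are hypothetical, and the sketch does not mirror the webcam image.

```python
def pixel_to_ui(px, py, window, aspect_ratio=16 / 9):
    """Map a camera pixel inside a calibrated window to UI coordinates.

    window is (left, top, right, bottom) in camera pixels. Points outside
    the window are clamped to its edge so the cursor never leaves the screen.
    """
    left, top, right, bottom = window
    u = min(max((px - left) / (right - left), 0.0), 1.0)
    v = min(max((py - top) / (bottom - top), 0.0), 1.0)
    x = (u - 0.5) * aspect_ratio
    y = 0.5 - v  # camera y grows downward, UI y grows upward
    return x, y


calibrated = (100, 100, 500, 400)  # hypothetical calibration result
print(pixel_to_ui(300, 250, calibrated))       # centre of the window
print(pixel_to_ui(100, 100, calibrated, 2.0))  # top left corner
```

Calibrating a smaller window than the full camera frame means the hand only has to move a comfortable distance to reach every corner of the screen.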

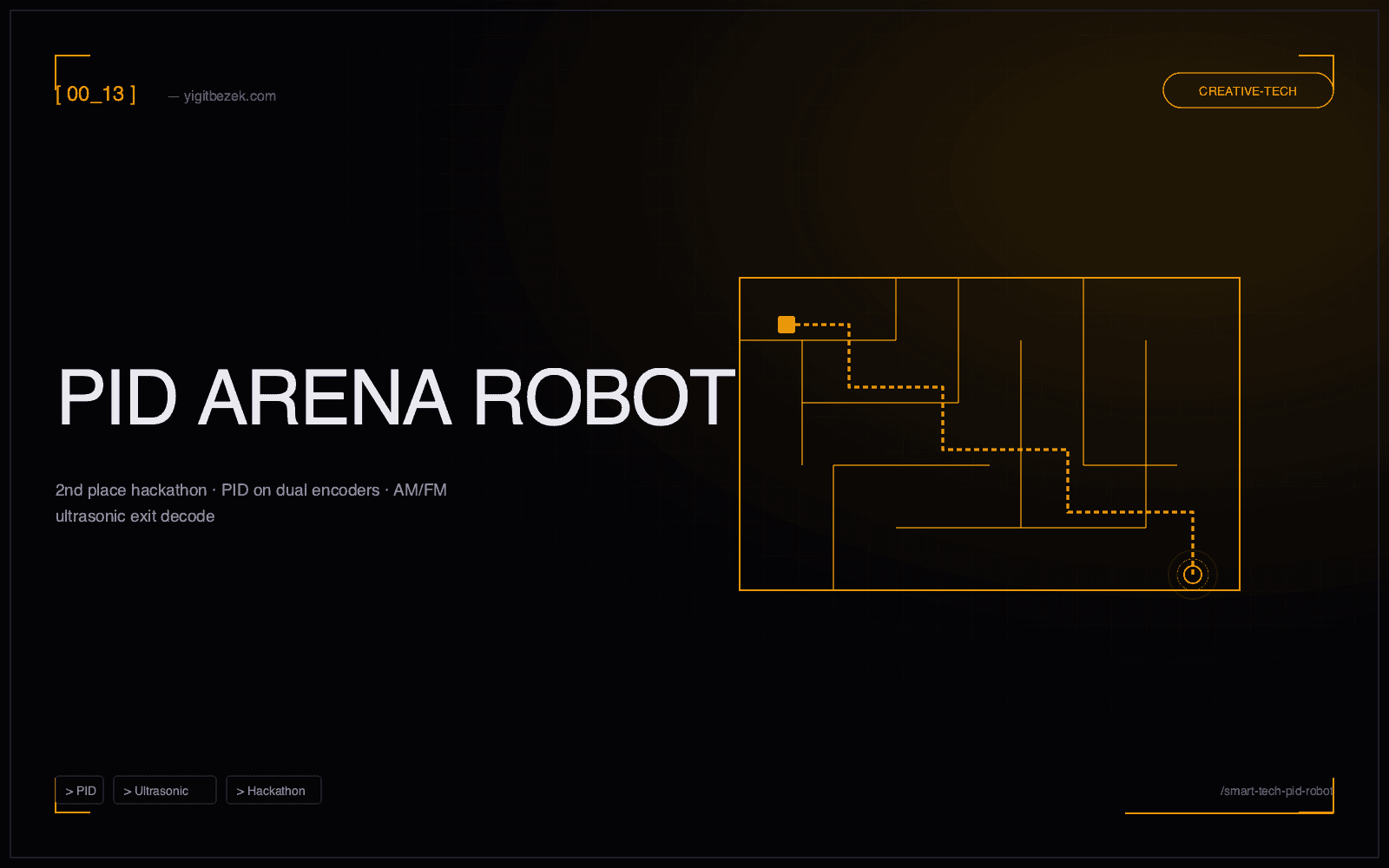

An Arduino controlled two wheel arena robot built for the UT Smart Technology hackathon. Won 2nd place. Closed loop PID on dual encoded DC motors, an IR beacon pair for arena detection, ultrasonic plus IR obstacle avoidance and AM/FM modulated ultrasonic decoding to pick the correct maze exit among several alternatives. All on the Arduino Motor Shield with an L298 H-bridge.

- 2nd place at the UT Smart Technology hackathon. Multi exit arena, not a single path maze

- On robot AM/FM demodulation of an ultrasonic audio beacon to decode the correct exit signal among several candidates

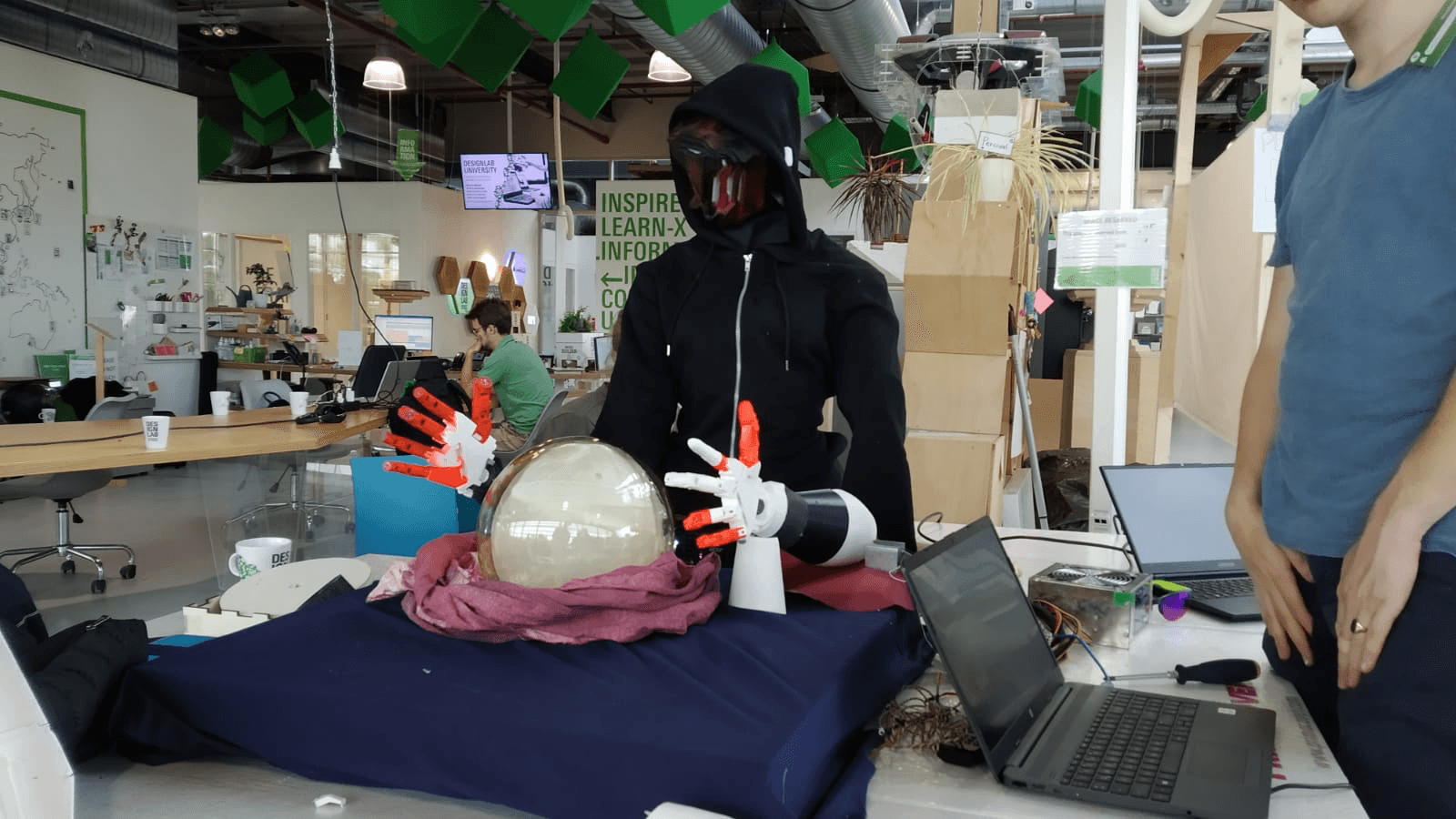

A theatrical interactive installation deployed at the UT DesignLab. A hooded performer in a 3D printed sci fi mask with articulated InMoov style robot hands sits behind a crystal ball, reacts to visitors through a Kinect fed depth and skeletal tracking pipeline, and fires a state machine driven narrative through the hands and the light on the ball. The average interaction runs 1 to 3 minutes, and about 40 percent of visitors come back for a second fortune.

- Deployed interactively at the UT DesignLab with dozens to hundreds of visitors per run

- Kinect based depth and skeletal tracking feeds a rule based state machine that drives the costume's robot hands and narrative beats in real time

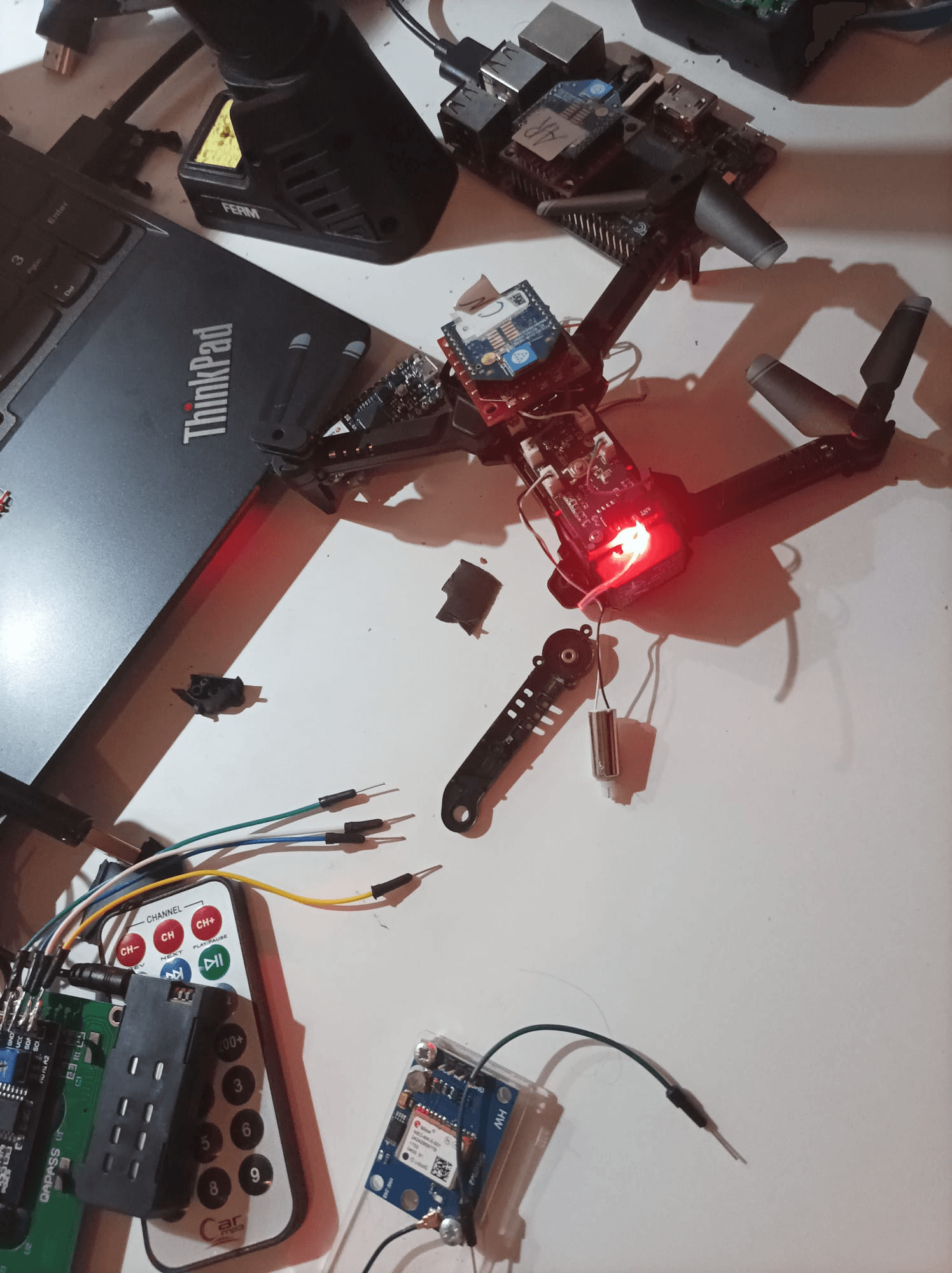

A custom frame quadcopter built to carry a Kinect for IR road surface scanning and a signal jammer payload, driven by a Pixhawk class flight controller with a Raspberry Pi ROS companion computer. Solo hobby build. I redesigned the chassis for weight distribution around the centre of lift, upgraded rotor torque for load capacity, and re tuned the FC so it flew stably with the full payload on board.

- Custom frame quadcopter carrying a Pixhawk FC plus a Raspberry Pi ROS companion plus GPS plus ultrasonic in one integrated build

- Redesigned chassis for weight distribution around the centre of lift to carry a Kinect depth sensor plus signal jammer payload

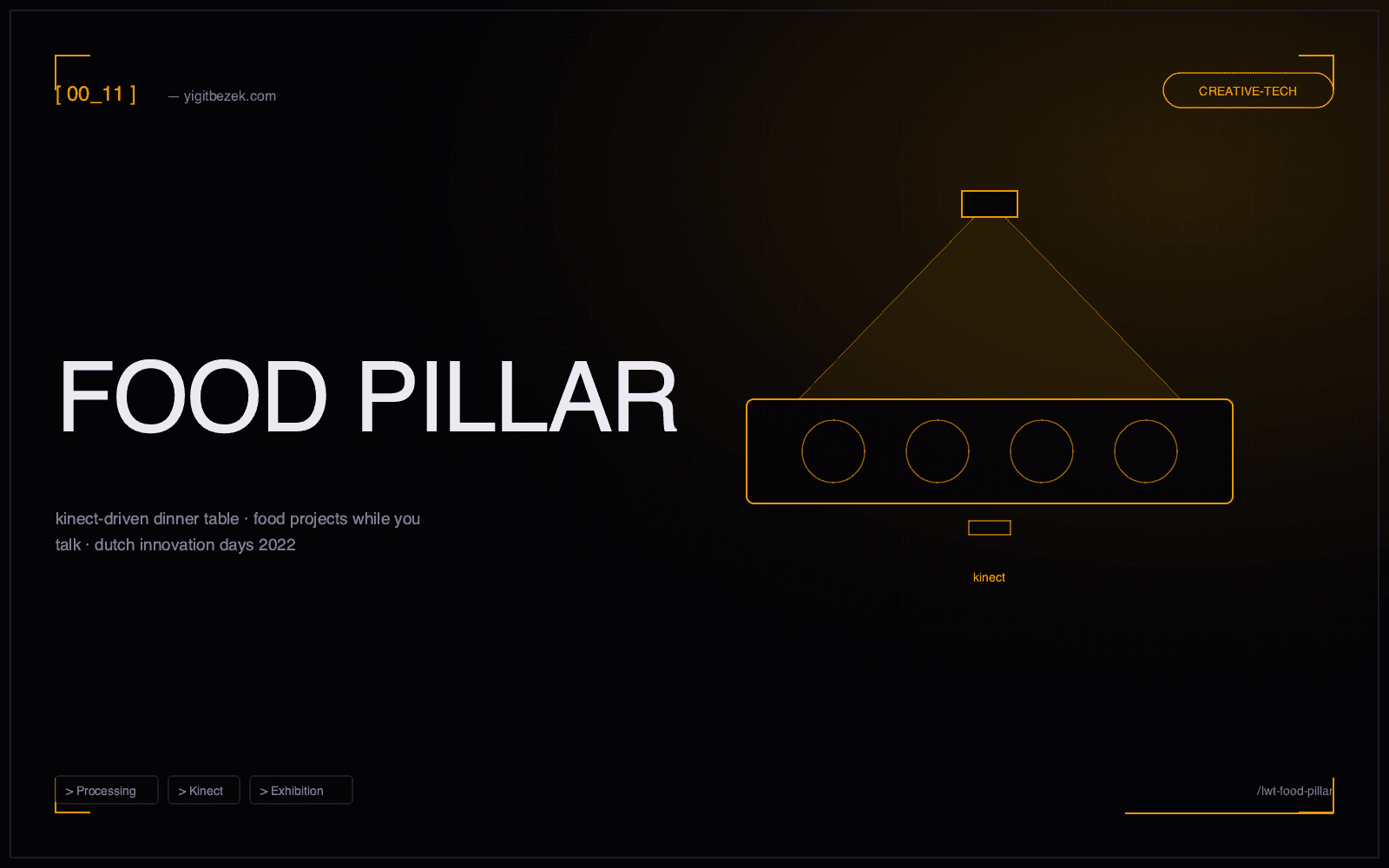

A 5 person interactive dinner table installation exhibited at Dutch Innovation Days 2022 in Enschede. A Kinect hidden inside the table watches whether visitors look down at a fake phone screen or up at each other, and a ceiling projector only paints food onto their plates while they keep talking.

- Exhibited publicly at Dutch Innovation Days 2022 in Enschede across a 5 day run

- Inverts the usual interaction model. The projector only renders food while visitors are NOT looking at a screen

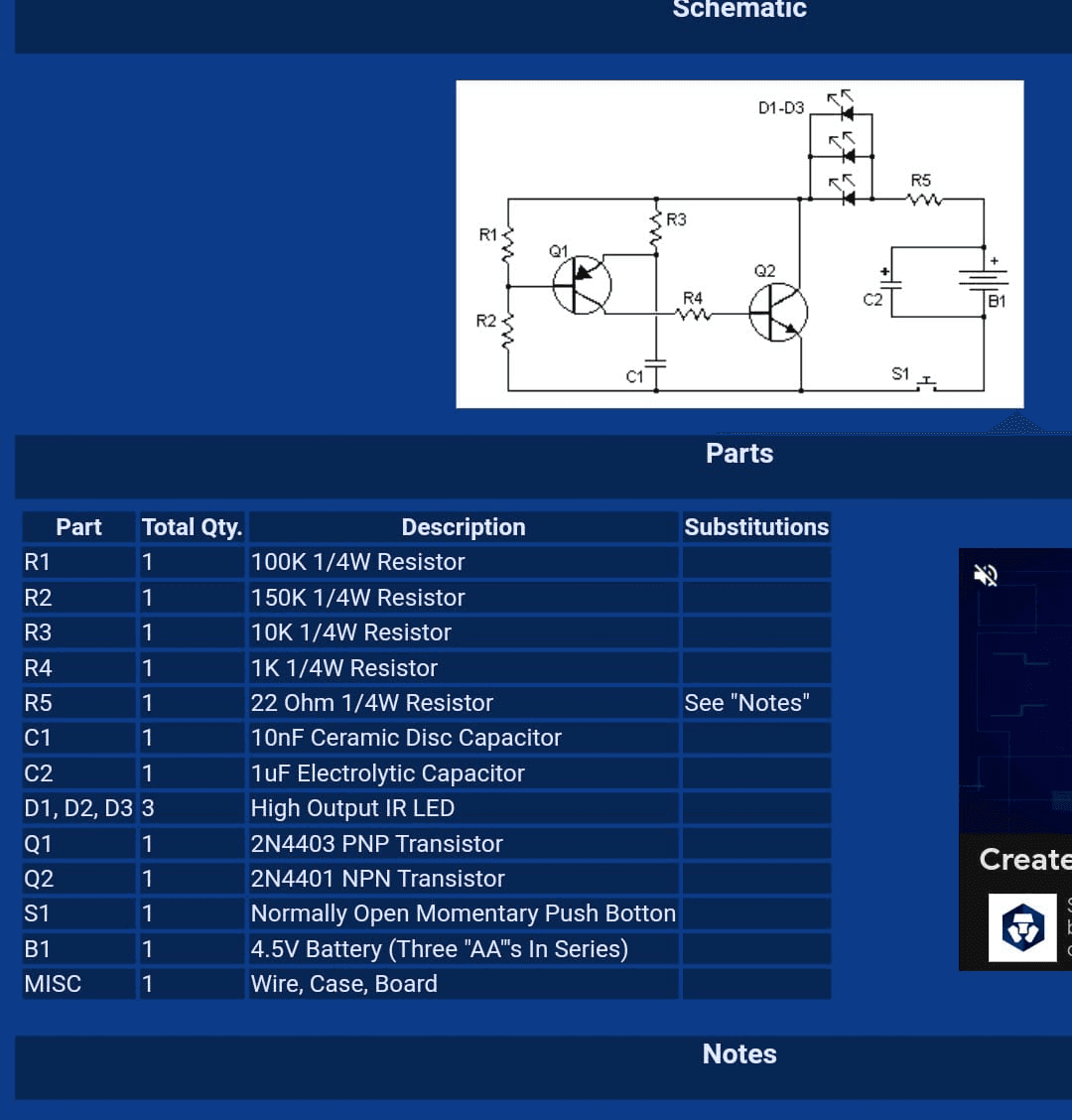

A self built transistor pair astable multivibrator driving three high output IR LEDs fast enough to swamp IR remote receivers at short range. A classic electronics learning exercise in oscillator design, with every R and C value calculated against the multivibrator frequency math. Disables TV remotes and IR motion sensors. Does not touch WiFi, Bluetooth or cellular.

- Transistor pair astable multivibrator (2N4403 PNP plus 2N4401 NPN) driving three high output IR LEDs into the IR remote receive band

- Every R and C value calculated against the multivibrator frequency math rather than copied blindly. The point of the exercise was the oscillator theory, not the jammer

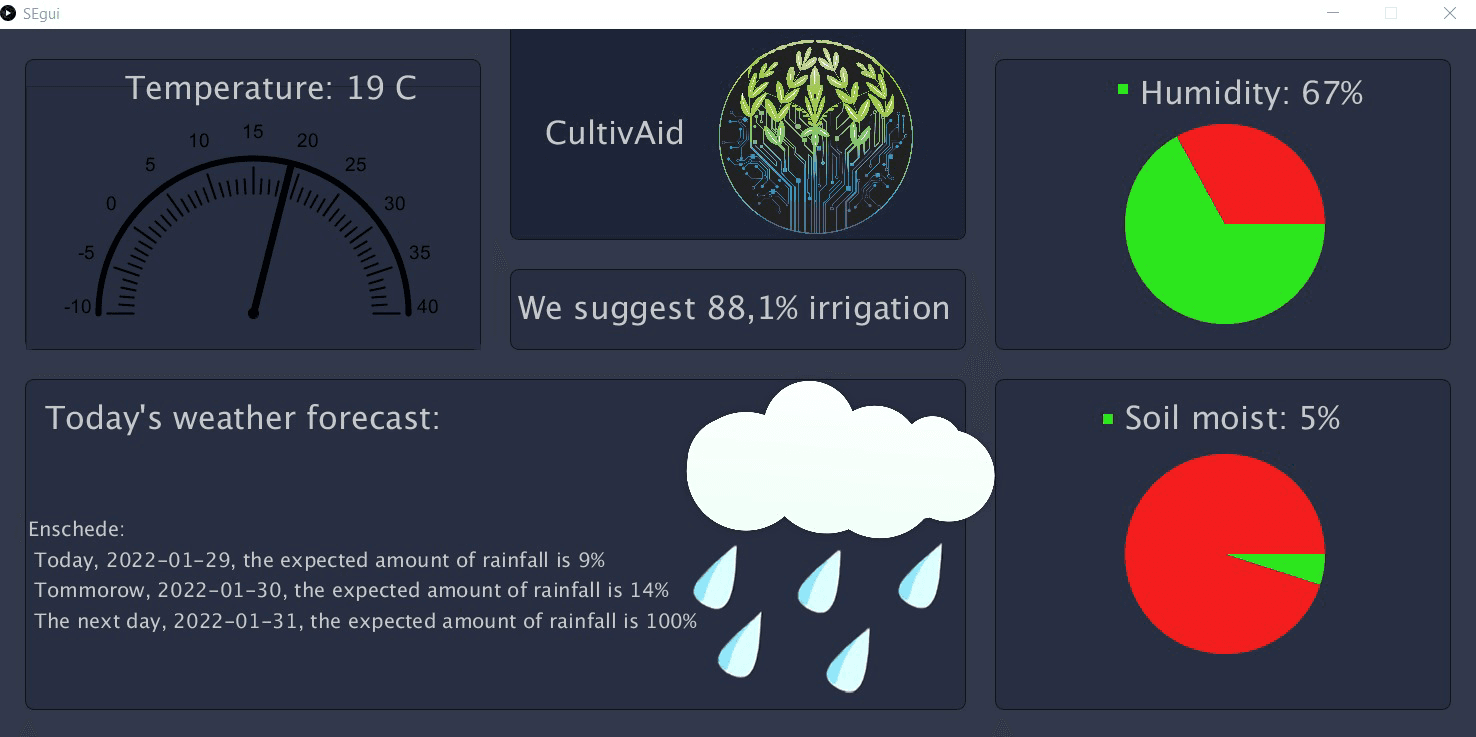

A Y1M2 Smart Environment project. An Arduino plus Grove sensor rig inside a potted basil plant feeds live temperature, humidity and soil moisture readings into a Processing desktop dashboard. The GUI fuses the live sensor stream with a 3 day Enschede weather forecast API and outputs a concrete irrigation recommendation. 'No irrigation needed' when the soil is wet, 'We suggest 88.1% irrigation' when it is dry.

- Full sensor to serial to Processing to weather API to irrigation recommendation pipeline shipped end to end

- Dashboard fuses live soil moisture readings with a 3 day Enschede rainfall forecast into a single actionable recommendation

An Arduino class low power bike theft detection module that fuses HC-SR04 ultrasonic radar (0.2 to 4.0 m scan), capacitive touch sensing and IMU motion detection into a single tamper vs passing by classifier, then fires BLE alerts to a paired phone in 200 to 500 ms. 2 to 4 days of battery life, false positive rate under 10 percent.

- Ultrasonic radar with 0.2 to 4.0 m scan range fused with capacitive touch sensing and IMU based motion detection on an Arduino class MCU

- BLE alerts to a paired phone in 200 to 500 ms with under 10 percent false positives through threshold tuning, temporal filtering and multi sensor fusion

Classic snake on an HTML5 canvas. Score tracking, high scores, smooth 60 fps render loop, arrow keys or WASD. Plays right in the grid or in the /playground sandbox.

- Zero dependencies — pure HTML5 canvas + vanilla JS

- Local-storage high-score persistence, arrow keys and WASD both supported

Interactive world map for exploring specialty coffee origins. Flavor profiles, altitude data, tasting notes, favourites and side-by-side region comparison. D3-geo on a modern ES module stack.

- D3-geo world projection with per-country hover and zoom

- Region comparison view with side-by-side tasting notes and altitude bands

Mobile-first workout tracker. Exercise library, set logging, progress charts through Chart.js and CSV export. Backed by Node + EJS + SQLite on the server side.

- Set-by-set logging with an exercise library of common lifts

- Chart.js progress graphs over time, CSV export for long-term analysis

Freelancer marketplace where buyers post projects, freelancers bid, and both sides manage milestones and reviews. Full session-based auth on Node + Express + SQLite.

- Two-sided marketplace: project postings, bids, milestones, reviews

- Session-based auth with server-side rendering, no SPA bundle

Full course catalog with lessons, quizzes, progress tracking, certificates and user reviews. CRUD admin panel. Built end-to-end as a claude-crew orchestration demo.

- Course catalog with lessons, quizzes, progress tracking, certificates

- Full CRUD admin panel — create courses, manage reviews, monitor enrollments

Business management suite for a single SMB owner — team and employee management, inventory, invoicing, purchase orders, suppliers and an analytics dashboard in one place.

- Six first-class surfaces: team, inventory, invoices, POs, suppliers, analytics

- Single-tenant admin dashboard as the landing surface, not a separate app

Matrix rain, a 3D rotating coin with faces, and bouncing-ball physics — originally Python curses, ported to HTML5 canvas so they run in the browser.

- Matrix rain, 3D rotating coin with faces, bouncing-ball physics

- Originally Python curses; ported to canvas without losing the terminal aesthetic

Shipped Job Agent, end to end LinkedIn auto apply

Personal Project

Jan 2026 – Mar 2026

A 3 tier auto apply system that tries DOM parsing first, then API interception for React SPAs, then Claude Vision as a fallback. On a March 2026 batch test it landed 82% success across 11 ATS platforms and I validated real LinkedIn Easy Apply end to end. Streamlit UI on top with a Folium map of NL job locations.

MSc Data Science and AI, applications in progress

Groningen (active) · TU/e (appeal filed) · TU Delft (rejected)

Jan 2026 – Present

Applying for Master's programs in Data Science and AI at a few Dutch universities. Groningen is currently active, TU/e is under appeal after an initial rejection, TU Delft said no. The places I want to land are the ones leaning into multi agent systems, edge AI and applied optimisation, which lines up with the VDB thesis and the LeadOrch and Jarvis work I am already doing day to day.

Built Jarvis, a local first personal AI OS

Personal Project

Dec 2025 – Present

11 Python packages, 35 MCP tools, 13 skills and a daemon that runs 12 scheduled jobs on an Apple M3. Everything is local by default through Ollama, so nothing leaves the laptop unless I explicitly allow it. There is a 4 layer Personal Knowledge Core on SQLite plus FTS5 with confidence gated writes, and Routing V2 classifies every request into one of six task classes and picks the model and PKC policy per class.

Launched LeadOrch, a B2B lead gen SaaS on Cloudflare

Personal Project / KVK 94668388

Nov 2025 – Present

Shipped a live B2B SaaS at leadorch.io on top of Cloudflare Workers, Workflows and Durable Objects, with Stripe live mode payments billed through a Dutch sole proprietorship. A 15 stage AI pipeline runs per customer request and talks to 11 third party services. I also migrated the whole stack end to end from Python and FastAPI to TypeScript on the edge in March 2026 so it could hibernate properly and run closer to the user.

VDB Thesis, AI enhanced facility layout (BSc capstone)

University of Twente × Van den Bos CM × FIP-AM@UT

Feb 2025 – Jul 2025

4th year Creative Technology capstone done with Van den Bos CM and the Fraunhofer Innovation Platform. Hybrid NSGA-II pipeline plus a two tier surrogate and a SimPy discrete event simulator, all optimising conveyor length and routing efficiency on a real factory layout. Layout B came in at 20.6% less conveyor and 15% more throughput against the VDB baseline, out of a final Pareto front of 38 feasible solutions.
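For readers new to Pareto fronts, a minimal non dominated filter over two objectives looks like this. It assumes conveyor length is minimised and throughput is maximised. The sample points are made up for illustration and are not thesis results.

```python
def dominates(a, b):
    """True if layout a is at least as good as b on both objectives and better on one.

    Each layout is (conveyor_length, throughput): lower length and higher
    throughput are better.
    """
    no_worse = a[0] <= b[0] and a[1] >= b[1]
    strictly_better = a[0] < b[0] or a[1] > b[1]
    return no_worse and strictly_better


def pareto_front(points):
    """Keep only the layouts that no other layout dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Illustrative values only.
layouts = [(100, 50), (80, 55), (90, 60), (120, 40), (80, 50)]
print(pareto_front(layouts))  # [(80, 55), (90, 60)]
```

Every layout left on the front is a genuine trade off: shortening the conveyor further would cost throughput, and the other way round.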

HiFi HCI, bouldering haptic coach

University of Twente × Mad Rock Gym (Y3)

Nov 2024 – Jan 2025

6 person HiFi HCI build that we actually demoed live at a climbing gym. A laptop watches the wall and the climber on webcam, fuses OpenCV hold detection with MediaPipe Pose, and fires a vibration on the next to move limb across four ESP8266 armbands over cross family ESP-NOW. User testing taught us to hint and not solve, and the system was built around that.

Smart Bike Fender, portable anti theft lamp (Y3 M7)

University of Twente, Module 7

Feb 2024 – Nov 2024

7 person minor reframed around the Dutch bike theft reality. The MCU, Li-Po, NeoPixel strip and IMU all live in a detachable brain that slides onto a magnet plus pogo pin rail, so the cheap passive mount is the only thing that stays on the bike. Car style blinkers, a brake flash that intensifies on deceleration and IMU driven auto brake detection.

BME IMU knee flexion sensor (Module 8 resit)

University of Twente, Biomedical Signals and Systems

Feb 2024 – Jun 2024

Two IMU wearable that streams accelerometer and gyroscope data from tibia and femur, fuses it through a complementary filter at α = 0.98 and plots it live in Python. Three fusion strategies kept side by side so the trade offs are visible. This one later seeded the running injury prevention feedback system.
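A short sketch of the three fusion strategies side by side, using α = 0.98 and a 100 Hz sample rate. The axis convention in `accel_angle`, the simulated gyro bias and the function names are assumptions for illustration.

```python
import math

ALPHA = 0.98  # complementary filter weight (from the text)
DT = 0.01     # 100 Hz sample period


def accel_angle(ax, az):
    """Segment tilt in degrees from the gravity vector (axis convention assumed)."""
    return math.degrees(math.atan2(ax, az))


def fuse_step(state, gyro_rate, acc_angle, alpha=ALPHA, dt=DT):
    """Advance all three estimates by one sample. Angles in degrees, rate in deg/s."""
    integrated = state["gyro_only"] + gyro_rate * dt
    blended = alpha * (state["complementary"] + gyro_rate * dt) + (1 - alpha) * acc_angle
    return {"acc_only": acc_angle, "gyro_only": integrated, "complementary": blended}


def knee_flexion(femur_angle, tibia_angle):
    """Knee flexion as the difference between the two segment angles."""
    return femur_angle - tibia_angle


# A segment held still at 30 degrees, with a small simulated gyro bias of 0.5 deg/s.
state = {"acc_only": 0.0, "gyro_only": 0.0, "complementary": 0.0}
true_angle = accel_angle(0.5, math.sqrt(3) / 2)
for _ in range(500):  # 5 seconds of samples
    state = fuse_step(state, gyro_rate=0.5, acc_angle=true_angle)
print(state)
```

In this toy run the gyro only estimate just integrates the bias and never finds the true angle, while the complementary estimate settles close to 30 degrees. With real data the accelerometer only trace would be the noisy one, which is the trade off the side by side view is meant to show.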

Webcam aimed zombie FPS (Y2 M6)

University of Twente, AI and Programming

Nov 2022 – Jan 2023

3D first person shooter in Ursina and Panda3D where you aim with your hand over webcam instead of a mouse. The cvzone hand detector tracks the index fingertip for aim, fire and reload, and a custom Panda3D CollisionRay maps the screen space cursor to a 3D world hit. Pair build with Marro64.

Smart Tech arena robot, 2nd prize hackathon

University of Twente, Module 5

Sep 2022 – Nov 2022

2nd place at the UT Smart Technology hackathon. Per motor PD control at 20 Hz on an Arduino Motor Shield, with hand rolled pin change interrupts for quadrature encoder B. On robot AM/FM demodulation of an ultrasonic beacon decoded the correct exit in a multi exit arena.
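A minimal PD loop at 20 Hz, written in Python for readability rather than as Arduino code. The gains, the PWM clamp and the class name are hypothetical, and the first sample skips the derivative term to avoid a startup kick.

```python
class PDController:
    """Proportional plus derivative control on a wheel speed error."""

    def __init__(self, kp, kd, dt, limit=255.0):
        self.kp = kp
        self.kd = kd
        self.dt = dt        # seconds between updates, 0.05 for 20 Hz
        self.limit = limit  # assumed PWM range is -limit..limit
        self.prev_error = None

    def update(self, target, measured):
        error = target - measured
        if self.prev_error is None:
            derivative = 0.0  # no history yet on the first sample
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        output = self.kp * error + self.kd * derivative
        return max(-self.limit, min(self.limit, output))


left_motor = PDController(kp=2.0, kd=0.1, dt=1 / 20)  # hypothetical gains
first = left_motor.update(target=100, measured=80)    # proportional term only
second = left_motor.update(target=100, measured=90)   # derivative now damps the push
print(first, second)
```

Running one controller instance per motor keeps the two wheels independent, so a slipping wheel does not drag the other one's command along with it.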

Food Pillar, Dutch Innovation Days 2022

UT Creative Technology × Dutch Innovation Days

Feb 2022 – Apr 2022

5 person Living and Working Tomorrow installation exhibited publicly at Dutch Innovation Days in Enschede. A Kinect hidden inside the table watches whether visitors look down at a fake phone or up at each other, and a ceiling projector only paints food onto their plates while they keep talking. Real time face detection via Haar cascade in Processing.

Smart Bike Theft Defense (first UT semester)

University of Twente

Sep 2021 – Nov 2021

First semester Arduino project. Ultrasonic radar plus capacitive touch plus IMU motion all fused into a tamper vs passing by classifier, and it fires a BLE alert to a paired phone in 200 to 500 ms. 2 to 4 days of battery life with under 10% false positives. The Smart Bike Fender three years later reused a lot of this design.

BSc Creative Technology

University of Twente

Sep 2021 – Jul 2025

Four year program at UT that mashes electronics, embedded systems, human centred design and full stack software into one degree. The module projects covered a PID hackathon robot, a Smart Tech arena robot, a Kinect driven Dutch Innovation Days installation, Biomedical Signals and Systems, the HiFi HCI bouldering haptic coach in Y3 and the VDB facility layout thesis with industry in Y4. I graduated leaning hard toward the projects that actually had to run in front of a real user.

Technology Stack

Tools, frameworks and platforms I actually build with. Each one is rated by how deep I have gone on it in real projects, not by how many tutorials I watched.


$ whoami

> creative_technologist

> ai_ml_engineer

> full_stack_developer

> systems_architect

System Profile

I studied Creative Technology at UT in Enschede and finished the bachelor in 2025. Right now I am applying for a Master's in Data Science and AI at Groningen and TU/e while building a live SaaS called LeadOrch on the side. My BSc thesis was an NSGA-II genetic algorithm on top of a discrete event simulator for a real factory layout, which is where most of my optimisation and applied AI chops come from.

Shrinking a 10⁹⁰ search space: NSGA-II for factory layout with a SimPy twin

BSc thesis, UT × Van den Bos CM × FIP-AM. 9 min read · PDF attached.

The work I pick up ends up being a mix of AI/ML systems, web products and small hardware things. I like projects where the whole stack has to agree with itself. Factory layout optimisation talks to a simulator, a climbing wall talks to four armbands over ESP-NOW, a lead gen pipeline talks to eleven APIs on Cloudflare Workers. None of this is neat on paper so I care a lot about actually shipping.

I do most of my thinking at the system level and most of my fun at the component level. So the work tends to go deep on both sides. Happy to talk about any of it if something here looks interesting.

Say Hi

If you have a role you think I would fit, a project that needs another pair of hands, or you just want to trade notes on something you are building, drop a message. I read everything and I try to reply within a day or two.

Direct Line

bezekyigit0@gmail.com

Currently available for opportunities